Proxies for Web Scraping: How They Empower Data Gathering and Analysis

Web scraping has become an integral part of data collection and analysis across many industries. As websites add measures to limit scraping, however, proxies have become an essential tool for working around those limits. This article covers what proxies are, why they matter for web scraping, their advantages and disadvantages, and practical tips for using them effectively.

What Are Proxies?

Proxies act as intermediaries between a user and the internet. When you access a website without a proxy, the site sees your IP address, which makes it easy to track your activity or limit your requests. By routing requests through a proxy server, you mask your own IP address and reach websites through one or more other IP addresses, which helps you work around per-IP limits and keep your own address private.
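As a rough illustration of the idea, here is a minimal sketch using only Python's standard library. The proxy address is a placeholder from a documentation-only IP range, and `build_proxy_opener` is a helper name made up for this example; substitute the endpoint and credentials your own provider gives you.

```python
import urllib.request

# Hypothetical proxy endpoint. 203.0.113.0/24 is reserved for documentation,
# so replace this with an address from your own proxy provider.
PROXY_URL = "http://203.0.113.10:8080"


def build_proxy_opener(proxy_url: str) -> urllib.request.OpenerDirector:
    """Return an opener that sends HTTP and HTTPS requests through proxy_url."""
    handler = urllib.request.ProxyHandler({"http": proxy_url, "https": proxy_url})
    return urllib.request.build_opener(handler)


opener = build_proxy_opener(PROXY_URL)

# With a working proxy, the target site sees the proxy's IP instead of yours:
# html = opener.open("https://example.com", timeout=10).read()
```

The request itself is left commented out because the placeholder address will not accept connections.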

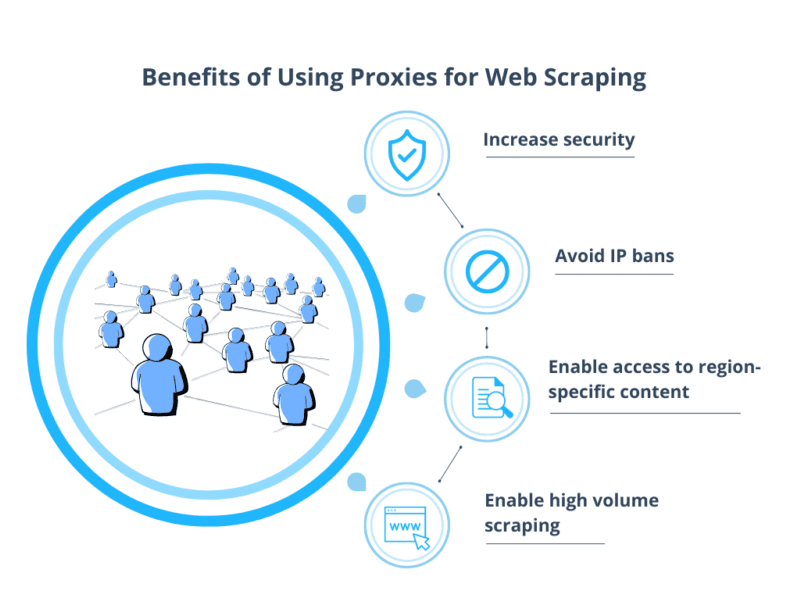

The Benefits of Using Proxies for Web Scraping

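Enhanced Anonymity: Using proxies hides your original IP address from the sites you scrape, so your requests are much harder to trace back to you. This lowers the risk of your own address being blocked, although it is not absolute anonymity: the proxy provider can still see your traffic, and websites can use other signals, such as request headers and browser fingerprints, to recognize a scraper.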

Geographical Flexibility: Proxies enable users to choose IP addresses from different locations, granting access to regionally-restricted websites or data. Bypassing these geographical limitations expands your data collection scope and provides diverse insights.

Avoiding IP Blocking: Websites often limit the number of requests from a single IP address in a given timeframe, which can hinder data collection. Proxies help you stay under these limits by rotating IP addresses, so you can scrape a website with a much lower risk of an IP ban.

Dynamic Prices and Discounts: Online stores may show different prices, discounts, and promotional offers depending on where a visitor appears to be. Proxies let e-commerce researchers collect this information in near real time from a range of IP addresses. The data can then be analyzed to optimize pricing strategies, identify market trends, and guide decision-making.

Increased Speed and Efficiency: With proxies, scraping can be distributed across multiple IP addresses and run in parallel, which increases the overall throughput of data gathering even if each individual request is no faster. Scraping larger quantities of data in less time can give you a competitive edge in data-driven analyses.

The Downside of Proxies for Web Scraping

Risk of Proxy Blacklisting: While proxies offer a shield of anonymity, a proxy server that other users abuse can end up blacklisted. Once an IP address is blacklisted, requests through it may be blocked or challenged with CAPTCHAs, which makes scraping harder and can leave gaps in your data.
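One way to limit the damage from a blacklisted proxy is to track block-like responses per proxy and stop using an address after repeated failures. The sketch below is only an illustration: the `ProxyHealth` class, the threshold of three failures, and the choice of HTTP 403 and 429 as "blocked" signals are all assumptions, and real sites may signal blocks differently.

```python
# Status codes treated as signs of a block (an assumption; sites vary).
BLOCK_STATUS_CODES = {403, 429}


class ProxyHealth:
    """Track consecutive block responses per proxy (hypothetical helper)."""

    def __init__(self, proxies, max_failures=3):
        self.max_failures = max_failures
        self.failures = {proxy: 0 for proxy in proxies}

    def report(self, proxy, status_code):
        """Record the HTTP status code a request through proxy received."""
        if status_code in BLOCK_STATUS_CODES:
            self.failures[proxy] += 1
        else:
            # Any other response resets the consecutive-failure count.
            self.failures[proxy] = 0

    def healthy(self):
        """Return the proxies that have not reached the failure threshold."""
        return [p for p, n in self.failures.items() if n < self.max_failures]
```

After each response you would call `report(proxy, status_code)` and pick the next proxy only from `healthy()`.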

Proxy Costs: Depending on the quality and type of proxies, costs can vary. High-quality residential proxies tend to be more expensive than datacenter proxies. Weighing the cost-effectiveness of the proxies and the value of the scraped data is crucial.

Slower Connection Speeds: Because each request takes an extra hop through the proxy server, connections are often slower than a direct internet connection. This can reduce the speed at which data is gathered, making it important to select reliable proxy providers.

Best Practices for Efficient Proxy Usage

Choose the Right Proxy Provider: Thoroughly research and select a reputable proxy provider that offers a variety of proxies and is known for its reliability, customer service, anti-detection capabilities, and affordable pricing plans.

Use Rotating Proxies: Rotating proxies automatically switch between different IP addresses, preventing websites from detecting and blocking excessive requests from a single IP.
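Many providers rotate addresses for you on their side, but the idea can also be sketched on the client. The example below cycles through a small pool in round-robin order. The `ProxyRotator` class and the proxy addresses are placeholders invented for illustration, not any provider's API.

```python
from itertools import cycle

# Hypothetical proxy endpoints from a documentation-only IP range.
PROXY_POOL = [
    "http://203.0.113.10:8080",
    "http://203.0.113.11:8080",
    "http://203.0.113.12:8080",
]


class ProxyRotator:
    """Hand out proxies from a fixed pool in round-robin order."""

    def __init__(self, proxies):
        if not proxies:
            raise ValueError("at least one proxy is required")
        self._cycle = cycle(list(proxies))

    def next_proxy(self):
        """Return the next proxy URL in the rotation."""
        return next(self._cycle)

    def proxies_for_request(self):
        """Return a scheme-to-proxy mapping for the next request."""
        proxy = self.next_proxy()
        return {"http": proxy, "https": proxy}


rotator = ProxyRotator(PROXY_POOL)
```

Each call to `rotator.proxies_for_request()` yields a mapping in the shape that `urllib.request.ProxyHandler` and similar HTTP clients accept, so consecutive requests go out through different addresses.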

IP Rotation Frequency: Adjust how often you rotate IP addresses based on each website's restrictions and requirements. Send few enough requests per address to avoid an IP ban while keeping data collection at a workable pace.
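Rotation frequency can be thought of as two knobs: how many requests each address serves before you switch, and how long you wait between requests. The sketch below combines both. The `ThrottledRotator` class and its default values are illustrative assumptions, and suitable numbers depend entirely on the target site.

```python
import time
from itertools import cycle


class ThrottledRotator:
    """Rotate to the next proxy every N requests, with a minimum delay
    between consecutive requests (hypothetical helper)."""

    def __init__(self, proxies, requests_per_proxy=5, min_delay=1.0):
        if not proxies:
            raise ValueError("at least one proxy is required")
        if requests_per_proxy < 1:
            raise ValueError("requests_per_proxy must be at least 1")
        self.requests_per_proxy = requests_per_proxy
        self.min_delay = min_delay
        self._cycle = cycle(list(proxies))
        self._current = next(self._cycle)
        self._used = 0
        self._last_request = None

    def acquire(self):
        """Sleep if needed, then return the proxy for the next request."""
        if self._last_request is not None:
            wait = self.min_delay - (time.monotonic() - self._last_request)
            if wait > 0:
                time.sleep(wait)
        if self._used >= self.requests_per_proxy:
            # The current address has served its quota; move to the next one.
            self._current = next(self._cycle)
            self._used = 0
        self._used += 1
        self._last_request = time.monotonic()
        return self._current
```

Lowering `requests_per_proxy` or raising `min_delay` makes the scraper gentler on each address at the cost of overall speed.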

Utilize Proxy Management Tools: Proxy management tools streamline the process by handling IP rotation, assisting with data extraction, and mitigating proxy-related issues. Services such as ProxyMesh or ScrapingBee can be integrated into your scraping workflow to maximize efficiency.

Additional Tips and Tricks for Successful Web Scraping

Avoid CAPTCHA Challenges: Websites often deploy CAPTCHAs to identify and block bot-like activity. CAPTCHA-solving services can handle challenges when they appear, while techniques like headless browsing, which make your requests look more like those of a real visitor, can reduce how often challenges are triggered.

Respect Web Scraping Etiquette: Stay within legal boundaries, respect website terms of service, moderate your scraping speed, and avoid excessive requests. Being ethical in your scraping practices supports a sustainable, long-term data gathering strategy.
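One concrete piece of etiquette is checking a site's robots.txt before fetching a page. The sketch below uses Python's standard `urllib.robotparser` on an inline sample so that it runs without network access. The sample rules, the `my-scraper` user agent, and the `is_allowed` helper are made up for illustration. Against a real site you would call `set_url()` and `read()` on the parser instead of parsing a string.

```python
from urllib.robotparser import RobotFileParser

# Sample robots.txt content (invented for this example).
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
Crawl-delay: 2
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())


def is_allowed(url, user_agent="my-scraper"):
    """Return True if the parsed robots.txt rules permit fetching url."""
    return parser.can_fetch(user_agent, url)


# Seconds the site asks crawlers to wait between requests, or None if unset.
crawl_delay = parser.crawl_delay("my-scraper")
```

Skipping URLs for which `is_allowed` returns False, and sleeping for `crawl_delay` seconds between requests when it is set, keeps a scraper within the limits the site has published.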

Overcoming Limitations: Proxy FAQs

Are proxies legal for web scraping?

The legality of web scraping varies by jurisdiction and website, but using a proxy is, in itself, generally legal. It remains essential to respect website terms of service and avoid prohibited activities.

How do residential proxies differ from datacenter proxies?

Residential proxies use IP addresses that Internet Service Providers (ISPs) assign to home users, so their traffic looks like it comes from real users. Datacenter proxies, on the other hand, use IP addresses that belong to data centers. They are often faster and cheaper, but easier for websites to identify as proxies.

Do all websites detect and block proxy activity?

No, but some websites employ detection mechanisms to identify and block proxy usage. Rotating proxies, or using a proxy provider with advanced anti-detection capabilities, can help you avoid being flagged.

Proxies have become an indispensable tool for web scraping, empowering data gathering and analysis in numerous industries. Their ability to provide anonymity, bypass limitations, and support efficient scraping makes them a go-to solution for data enthusiasts. By understanding the pros and cons, following best practices, and scraping ethically, you can harness the power of proxies to enhance your web scraping and gain valuable insights.